What is an Nvidia GPU?

An Nvidia GPU (Graphics Processing Unit) is a specialized processor designed to rapidly process and manipulate graphics data. Nvidia GPUs are typically found in high-end gaming computers, workstations, and servers, where they power complex video games and other graphics-intensive or highly parallel tasks.

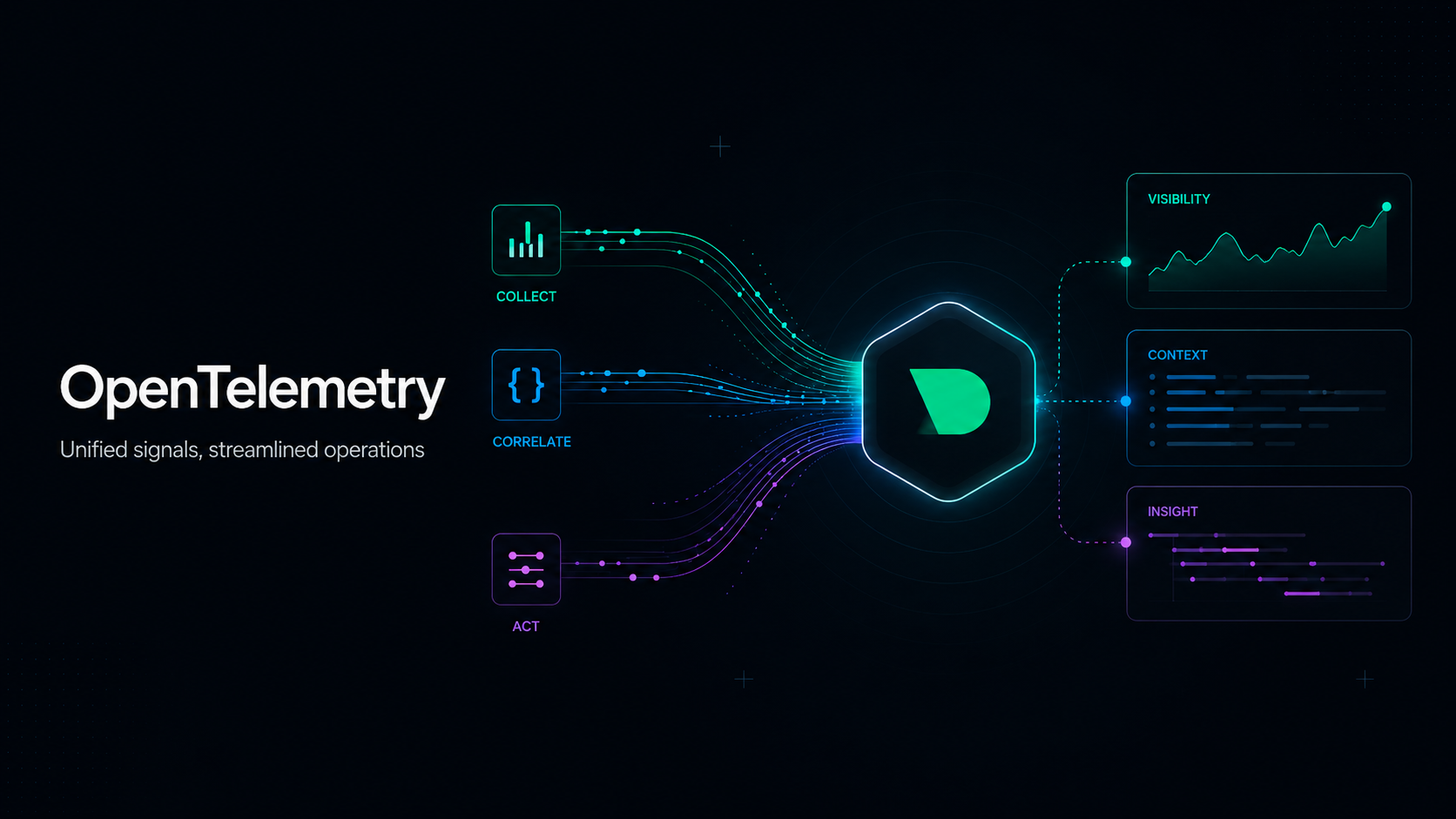

Monitoring Nvidia GPU with Netdata

To monitor an Nvidia GPU with Netdata, you need a system with an Nvidia GPU and Netdata installed on it.

Netdata auto-discovers hundreds of services, and for those it doesn't, turning on manual discovery is a one-line configuration. For more information on configuring Netdata for Nvidia GPU monitoring, please read the collector documentation.

Once the collector is running, you should see the Nvidia GPU section on the Overview tab in Netdata Cloud, already populated with charts for the metrics described below.

Netdata has a public demo space (no login required) where you can explore different monitoring use-cases and get a feel for Netdata.

Which Nvidia GPU metrics are important to monitor, and why?

GPU PCIe Bandwidth Usage

The rate at which data moves between the GPU and the host over the PCIe bus, in both the receive (RX) and transmit (TX) directions. Monitor GPU PCIe bandwidth usage to spot transfer bottlenecks: sustained traffic close to the link's capacity means the GPU may be waiting on data rather than computing, while unexpectedly low traffic during a busy workload can indicate that data is not reaching the GPU as intended.
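To judge whether PCIe traffic is close to a bottleneck, it helps to compare the observed throughput with the theoretical capacity of the link. The sketch below assumes you already know the link generation and lane width, and uses approximate per-lane, per-direction figures for PCIe generations 1 to 5. The function names are illustrative and not part of Netdata.

```python
# Approximate usable bandwidth per lane, per direction, in MB/s.
PCIE_MBPS_PER_LANE = {1: 250, 2: 500, 3: 985, 4: 1969, 5: 3938}


def pcie_link_capacity_mbps(gen, width):
    """Theoretical one-direction capacity of a PCIe link in MB/s."""
    return PCIE_MBPS_PER_LANE[gen] * width


def pcie_usage_percent(throughput_mbps, gen, width):
    """Observed throughput as a percentage of the link's capacity."""
    return 100.0 * throughput_mbps / pcie_link_capacity_mbps(gen, width)


if __name__ == "__main__":
    # Hypothetical reading: 7880 MB/s on a Gen3 x16 link.
    print(f"{pcie_usage_percent(7880, gen=3, width=16):.1f}% of link capacity")
```

Real links rarely reach the theoretical figure because of protocol overhead, so treat the percentage as an upper bound on headroom.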

GPU Fan Speed Percentage

The speed at which the GPU's fan is intended to run, as a percentage of its maximum. Monitor GPU fan speed percentage together with temperature to confirm that cooling keeps up with the load. If the fan speed stays low while the temperature climbs, the GPU can overheat and suffer performance issues.

GPU Utilization

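GPU utilization is the percentage of time, over the last sample period, during which the GPU was executing work. Monitor GPU utilization to confirm that the GPU is being used efficiently: sustained low values under load suggest the workload is bottlenecked elsewhere (CPU, I/O, or data transfer), while values pinned near 100% indicate the GPU is saturated.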

GPU Memory Utilization

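GPU memory utilization is the percentage of time, over the last sample period, during which the GPU's memory was being read or written. It reflects memory activity rather than how much memory is allocated, which is covered under frame buffer memory usage. Monitor GPU memory utilization to see whether a workload is limited by memory bandwidth rather than by compute.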
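If you want to cross-check the two utilization values outside Netdata, the `nvidia-smi` tool can print them as CSV. The following is a minimal sketch, assuming `nvidia-smi` is on the PATH and using its `--query-gpu` fields `utilization.gpu` and `utilization.memory`. The helper names are illustrative, and unsupported values are mapped to `None`.

```python
import subprocess

FIELDS = ["utilization.gpu", "utilization.memory"]


def parse_csv_line(line, fields=FIELDS):
    """Map one CSV line of nvidia-smi output to {field: float or None}."""
    values = [v.strip() for v in line.split(",")]
    result = {}
    for name, raw in zip(fields, values):
        try:
            result[name] = float(raw)
        except ValueError:
            # Values the GPU does not report are not numeric.
            result[name] = None
    return result


def query_gpus(fields=FIELDS):
    """Run nvidia-smi once and return one dict per GPU."""
    cmd = [
        "nvidia-smi",
        f"--query-gpu={','.join(fields)}",
        "--format=csv,noheader,nounits",
    ]
    out = subprocess.run(cmd, capture_output=True, text=True, check=True).stdout
    return [parse_csv_line(line, fields) for line in out.splitlines() if line.strip()]


if __name__ == "__main__":
    # Parsing a hypothetical output line, so no GPU is needed to try it.
    print(parse_csv_line("87, 42"))
```

Keeping the parser separate from the subprocess call makes it easy to test the parsing logic on a machine without a GPU.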

GPU Decoder Utilization

GPU decoder utilization is the percentage of time the GPU's hardware video decoder is busy. Monitor GPU decoder utilization during video playback or transcoding workloads to ensure that the decoder is being used efficiently and that it is not overloaded.

GPU Encoder Utilization

GPU encoder utilization is the percentage of time the GPU's hardware video encoder is busy. Monitor GPU encoder utilization during streaming, recording, or transcoding workloads to ensure that the encoder is being used efficiently and that it is not overloaded.

GPU Frame Buffer Memory Usage

GPU frame buffer memory usage is the amount of the GPU's on-board memory (the frame buffer, often called VRAM) that is currently used, free, or reserved. Monitor GPU frame buffer memory usage to ensure that workloads have enough memory available: when usage approaches the total, new allocations can fail with out-of-memory errors.
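Frame buffer usage is easiest to reason about as a percentage of the total and as remaining headroom. The sketch below assumes readings in MiB for used, total, and reserved memory. The function names and the sample numbers are illustrative.

```python
def fb_usage_percent(used_mib, total_mib):
    """Used frame buffer memory as a percentage of the total."""
    if total_mib <= 0:
        raise ValueError("total_mib must be positive")
    return 100.0 * used_mib / total_mib


def fb_headroom_mib(used_mib, total_mib, reserved_mib=0):
    """Memory still available for new allocations, in MiB."""
    return total_mib - used_mib - reserved_mib


if __name__ == "__main__":
    # Hypothetical 8 GiB card with 6 GiB in use and 256 MiB reserved.
    print(f"{fb_usage_percent(6144, 8192):.1f}% used")
    print(f"{fb_headroom_mib(6144, 8192, 256)} MiB free")
```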

GPU BAR1 Memory Usage

GPU BAR1 memory usage is the amount of the GPU's BAR1 aperture that is in use. BAR1 maps the GPU's frame buffer memory so that it can be accessed directly by the CPU or by other devices on the PCIe bus. Monitor GPU BAR1 memory usage to ensure that enough of the aperture remains available for these mappings.

GPU Temperature

GPU temperature is how hot the GPU is running, in degrees Celsius. Monitor GPU temperature to ensure that the GPU is not overheating: a GPU that gets too hot lowers its clock speeds (thermal throttling) to protect itself, which reduces performance.
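A single hot reading is usually less interesting than a temperature that stays high. One simple way to express that is to check whether the most recent samples all exceed a threshold, as sketched below. The 85 degree threshold and the window of three samples are assumptions for illustration, not vendor limits.

```python
def sustained_above(samples, threshold, min_count):
    """True if the last `min_count` samples all exceed `threshold`."""
    if len(samples) < min_count:
        return False
    return all(s > threshold for s in samples[-min_count:])


if __name__ == "__main__":
    temps_c = [70, 86, 87, 88]  # hypothetical readings, oldest first
    if sustained_above(temps_c, threshold=85, min_count=3):
        print("GPU temperature has stayed above 85 C")
```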

GPU Voltage

GPU voltage is the electrical potential supplied to the GPU. Monitor GPU voltage to ensure that the GPU is receiving a stable supply and enough power to function properly. Voltage normally rises and falls together with clock frequency and load.

GPU Clock Frequency

GPU clock frequency is the speed at which the GPU's clock domains (graphics, video, SM, and memory) are running. Monitor GPU clock frequency to ensure that the GPU reaches its expected speed under load: clocks that drop while utilization stays high usually point to thermal or power throttling.
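Clock frequency is most informative when read next to utilization. A rough heuristic, sketched below, flags a GPU that is busy but running well under its maximum clock. The 80% clock ratio and 90% utilization cutoffs are arbitrary assumptions that you would tune for your hardware.

```python
def looks_throttled(clock_mhz, max_clock_mhz, utilization_pct,
                    clock_ratio=0.8, busy_pct=90.0):
    """Heuristic: the GPU is busy, yet its clock is well below the maximum."""
    is_busy = utilization_pct >= busy_pct
    is_slow = clock_mhz < clock_ratio * max_clock_mhz
    return is_busy and is_slow


if __name__ == "__main__":
    # Hypothetical reading: 1200 MHz of a 1900 MHz maximum at 98% utilization.
    print(looks_throttled(1200, 1900, 98))
```

An idle GPU also runs at low clocks, which is why the check requires high utilization before reporting anything.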

GPU Power Draw

GPU power draw is the amount of power the GPU is consuming, in watts. Monitor GPU power draw to ensure that the GPU stays within its power limit and the capacity of its power supply. A GPU that sits at its power limit under load may have its clocks capped.
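Power draw is easier to interpret relative to the configured power limit. The sketch below assumes you have both values in watts. The 95% margin used to decide that the GPU is "at its cap" is an assumption.

```python
def power_usage_percent(draw_w, limit_w):
    """Power draw as a percentage of the power limit."""
    if limit_w <= 0:
        raise ValueError("limit_w must be positive")
    return 100.0 * draw_w / limit_w


def at_power_cap(draw_w, limit_w, margin=0.95):
    """True if the draw is within `margin` of the limit or above it."""
    return draw_w >= margin * limit_w


if __name__ == "__main__":
    # Hypothetical reading: 225 W drawn against a 250 W limit.
    print(f"{power_usage_percent(225.0, 250.0):.0f}% of the power limit")
```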

GPU Performance State

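GPU performance state (P-state) is the current performance level of the GPU, ranging from P0 (maximum performance) to higher-numbered states with lower performance and lower power use. Monitor GPU performance state to confirm that the GPU switches to a high-performance state under load and drops back to a low-power state when idle.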
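Performance states are reported as labels such as `P0` or `P8`, so turning them into integers makes them easier to chart or compare. This is a minimal sketch. The function name is illustrative, and any text that does not look like a P-state yields `None`.

```python
import re


def parse_pstate(text):
    """Convert a label like 'P8' to the integer 8, or None if it is not a P-state."""
    match = re.fullmatch(r"P(\d+)", text.strip())
    return int(match.group(1)) if match else None


if __name__ == "__main__":
    for label in ["P0", "P8", "[N/A]"]:
        print(label, "->", parse_pstate(label))
```

Because lower numbers mean higher performance, a busy GPU that reports a high number is worth investigating.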